Data Integration: RM/COBOL to AWS with AI-Enhanced Analytics Pipeline

Healthcare Technology Organization

Overview

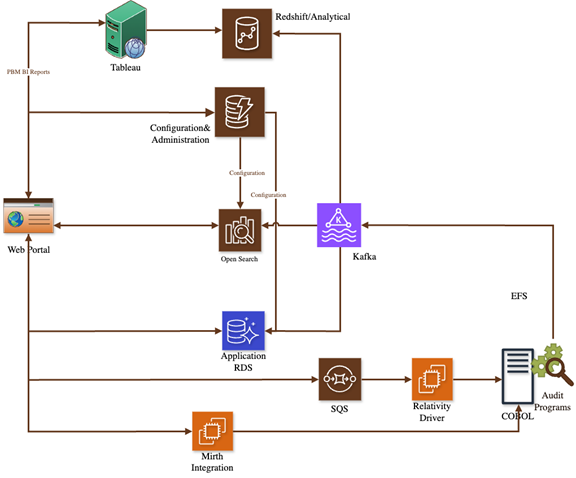

Built a near-real-time data integration platform that synchronizes legacy RM/COBOL transactions into AWS, feeding operational databases (RDS), analytical warehouses (Redshift), and AI-powered search indexes (OpenSearch). The pipeline preserves the COBOL system's commit order while enabling modern analytics, predictive insights, and full-text search.

Client Profile

The Challenge

Synchronizing COBOL transactions into AWS in near real time

Maintaining strict ordering (same sequence as committed in COBOL)

Delivering data to RDS, Redshift, and OpenSearch simultaneously

Enabling AI-powered analytics on previously inaccessible legacy data

Solution Architecture

Mirth Integration Hub: Canonical ingestion point validating, normalizing, and routing claim transactions.

COBOL Change Capture: Audit programs write auditable change records to an EFS-backed landing zone.

Relativity Driver (Orchestration/CDC): SQS-based decoupling for retries and backpressure.

Kafka Streaming Backbone: Source of truth for fan-out to operational, analytical, and AI-powered search destinations.

Multi-Destination Delivery: A single source (Kafka) fans out to RDS (operational), Redshift (analytics), and OpenSearch (AI-powered search).

Idempotent & Retry-Safe Processing: Handles partial failures and replay without duplicates.
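
A minimal sketch of how retry-safe delivery can work, assuming each change record carries a sequence number assigned at commit time. The names `ChangeEvent`, `record_key`, `seq`, and `IdempotentSink` are hypothetical and not taken from the project; the in-memory dictionaries stand in for a real destination such as an RDS table.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical change record; field names are illustrative only."""
    record_key: str  # e.g. a claim identifier
    seq: int         # assumed commit sequence number from the source system
    payload: dict


@dataclass
class IdempotentSink:
    """In-memory stand-in for a destination such as an operational table."""
    rows: Dict[str, dict] = field(default_factory=dict)
    last_seq: Dict[str, int] = field(default_factory=dict)

    def apply(self, event: ChangeEvent) -> bool:
        """Apply the event once; return False if it was already applied."""
        if event.seq <= self.last_seq.get(event.record_key, -1):
            return False  # duplicate or stale redelivery: safe to ignore
        self.rows[event.record_key] = event.payload
        self.last_seq[event.record_key] = event.seq
        return True


sink = IdempotentSink()
events = [
    ChangeEvent("claim-1", 1, {"status": "received"}),
    ChangeEvent("claim-1", 2, {"status": "adjudicated"}),
]

# Simulate a full redelivery after a partial failure: every event arrives twice.
for e in events + events:
    sink.apply(e)
```

Because the sink compares each event's sequence number with the last one applied for that key, redelivered events are skipped and the destination ends in the same state as after a single clean pass.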

Architecture Diagram — Data Integration: RM/COBOL to AWS

Features & Capabilities

Near-Real-Time Synchronization

Sub-minute latency between COBOL transaction commit and downstream availability

Strict Transaction Ordering

Events are processed in exactly the order they were committed in the COBOL system
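
Kafka guarantees ordering only within a partition, so one common way to preserve commit order is to route all events for the same record to the same partition. The sketch below illustrates that idea with the standard library only. It assumes ordering is needed per record key (strict global ordering would require a single partition), and it uses `zlib.crc32` as a stand-in hash rather than Kafka's own partitioner. `NUM_PARTITIONS`, `partition_for`, and the sample keys are hypothetical.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 4  # illustrative value, not the project's configuration


def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Map a record key to a partition with a stable hash (stand-in for Kafka's partitioner)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Each partition is an append-only list, so order within it is preserved.
log = defaultdict(list)

# (record key, commit sequence) pairs in the order the source committed them.
commits = [("claim-1", 1), ("claim-2", 1), ("claim-1", 2), ("claim-2", 2), ("claim-1", 3)]
for key, seq in commits:
    log[partition_for(key)].append((key, seq))


def seqs_for(key: str) -> list:
    """Read back the sequence numbers for one key from its partition."""
    return [seq for k, seq in log[partition_for(key)] if k == key]
```

Whichever partitions the keys land on, a consumer reading a partition sees every event for a given key in commit order.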

AI-Enhanced Search & Discovery

OpenSearch powering intelligent full-text search, filtering, and pattern detection

Multi-Destination Delivery

Kafka fan-out to Operational DB, Analytics Warehouse, and AI Search Index

Replayable & Auditable Pipeline

Supports backfills, debugging, compliance audits, and recovery
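
Fan-out and replay both follow from keeping a durable log with an independent read position per destination. The following is a simplified in-memory sketch of that idea, not the project's Kafka setup: `ReplayableLog`, `poll`, and `seek` are hypothetical names that loosely mirror consumer-group offsets.

```python
from typing import Callable, Dict, List


class ReplayableLog:
    """Append-only log with one read offset per named consumer."""

    def __init__(self) -> None:
        self.events: List[dict] = []
        self.offsets: Dict[str, int] = {}

    def append(self, event: dict) -> None:
        self.events.append(event)

    def poll(self, consumer: str, handler: Callable[[dict], None]) -> int:
        """Deliver all unread events to the handler; return how many were delivered."""
        start = self.offsets.get(consumer, 0)
        for event in self.events[start:]:
            handler(event)
        self.offsets[consumer] = len(self.events)
        return len(self.events) - start

    def seek(self, consumer: str, offset: int) -> None:
        """Rewind (or advance) one consumer without affecting the others."""
        self.offsets[consumer] = offset


log = ReplayableLog()
for i in range(3):
    log.append({"seq": i})

# Two destinations read the same log independently.
rds_rows: List[dict] = []
search_docs: List[dict] = []
log.poll("rds", rds_rows.append)
log.poll("opensearch", search_docs.append)

# Rebuild only the search index: clear it and replay from the beginning.
search_docs.clear()
log.seek("opensearch", 0)
replayed = log.poll("opensearch", search_docs.append)
```

Rewinding one consumer leaves the others untouched, which is what makes backfills and index rebuilds possible without re-reading the source system.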

Resilient External Ingestion

Mirth Connect handles variable pharmacy claim formats

Zero Downtime Modernization

Enabled AI analytics and new digital features without touching legacy COBOL

Technology Stack

Security & Compliance

TLS 1.3 across all connections; KMS-managed encryption for all storage

IAM roles per component

All data movement logged via CloudTrail, VPC Flow Logs, Kafka audit logs

Private subnets for Kafka, RDS, Redshift, OpenSearch

HIPAA (with BAA), SOC 2 Type II, NIST SP 800-53

Results & Impact

Data Latency

<90 seconds from COBOL commit to final destination

Data Volume

10k-50k events/hour

Search Capability

Full-text search on previously inaccessible legacy data

Analytics

Real-time reporting via Redshift and AI-powered dashboards

Reliability

EFS staging + SQS decoupling + Kafka replayability
