AWS EKS Migration & AI-Ready Microservices Modernization

Healthcare Technology Organization

Overview

Consolidated a production-grade AWS workload spread across Lambda, EC2, and ECS onto a single Amazon EKS cluster and modernized it into independently deployable microservices, adding AI/ML-ready infrastructure, dynamic auto-scaling, and zero-downtime deployments.

Client Profile

The Challenge

Workloads split across mixed compute services (Lambda, EC2, ECS), making scaling and cost optimization difficult

Long-term maintainability concerns with fragmented architecture

Need to support future AI/ML workloads requiring specialized compute resources

Need for model-serving infrastructure to support AI/ML pipelines

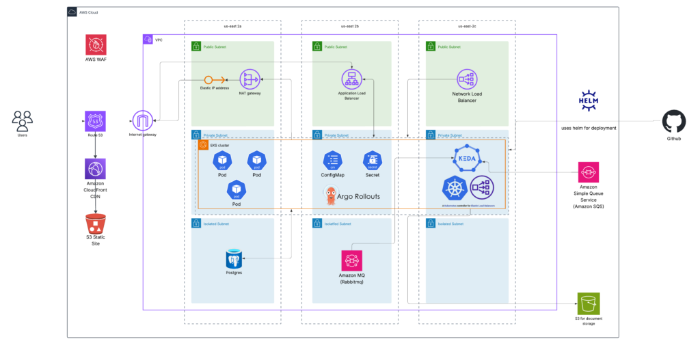

Solution Architecture

Cluster Architecture: AWS-managed EKS control plane with Multi-AZ node groups: a Base Group (On-Demand) for critical system components and a Dynamic Group auto-provisioned by Karpenter. ARM (Graviton) instances for ~40% cost savings; Spot instances for non-production environments.
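A minimal sketch of how the Dynamic Group could be declared as a Karpenter NodePool, emitted as JSON (which `kubectl apply -f` accepts). It assumes the Karpenter v1 API (`karpenter.sh/v1`) and a pre-existing `EC2NodeClass` named `default`; the pool name, the production switch, and the CPU limit are illustrative assumptions, not the client's actual settings. This pool is restricted to Graviton (arm64); On-Demand x86 capacity would need its own pool.

```python
import json


def karpenter_nodepool(name: str, production: bool, cpu_limit: int = 1000) -> dict:
    """Build a Karpenter v1 NodePool manifest (illustrative values only).

    Nodes are restricted to Graviton (arm64). Spot capacity is allowed only
    outside production, mirroring the Spot-for-non-prod split described above.
    """
    capacity_types = ["on-demand"] if production else ["spot", "on-demand"]
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    # Assumption: an EC2NodeClass named "default" already exists.
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": "default",
                    },
                    "requirements": [
                        {"key": "kubernetes.io/arch", "operator": "In", "values": ["arm64"]},
                        {"key": "karpenter.sh/capacity-type", "operator": "In", "values": capacity_types},
                    ],
                }
            },
            # Upper bound on the total vCPUs this pool may provision.
            "limits": {"cpu": str(cpu_limit)},
        },
    }


if __name__ == "__main__":
    print(json.dumps(karpenter_nodepool("dynamic-nonprod", production=False), indent=2))
```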

Migration & Containerization: Converted Lambda functions into RESTful API-based microservices. Refactored EC2/ECS apps into independent microservices. Standardized Dockerfiles for all services. AI/ML-ready infrastructure with KEDA for event-driven scaling.
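One common way to turn a Lambda function into a containerized REST service is a thin adapter that maps each HTTP request onto a Lambda-style event and calls the existing handler unchanged. The sketch below uses only the Python standard library; the `handler` body, the minimal event shape (a small subset of an API Gateway proxy event), and port 8080 are hypothetical, not the client's code.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def handler(event, context):
    """Stand-in for an existing Lambda handler (hypothetical business logic)."""
    body = json.loads(event.get("body") or "{}")
    return {"statusCode": 200, "body": json.dumps({"echo": body})}


def to_lambda_event(method: str, path: str, body: bytes) -> dict:
    """Map an HTTP request onto a minimal API Gateway-style proxy event."""
    return {"httpMethod": method, "path": path, "body": body.decode() or None}


class LambdaAdapter(BaseHTTPRequestHandler):
    """Serves the unchanged handler behind a plain HTTP endpoint in a container."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        event = to_lambda_event("POST", self.path, self.rfile.read(length))
        result = handler(event, None)  # there is no Lambda context outside Lambda
        payload = result.get("body", "").encode()
        self.send_response(result.get("statusCode", 200))
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


if __name__ == "__main__":
    # Port 8080 is an assumption; match it to the container and Service ports.
    HTTPServer(("0.0.0.0", 8080), LambdaAdapter).serve_forever()
```

Keeping the handler signature intact lets the business logic move first and be reshaped into proper REST resources later.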

CI/CD Pipeline: GitHub Actions → CodeBuild → Docker images → ECR → Helm upgrade → Argo Rollouts with canary analysis.
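The last two hops of the pipeline (ECR image reference into a Helm upgrade) can be sketched as below. It assumes the chart exposes `image.repository` and `image.tag` values and that images are tagged with something like a commit SHA; the account ID, region, release, chart path, and namespace are hypothetical. Once Helm updates the Rollout's pod template, Argo Rollouts takes over and runs the canary.

```python
import shlex


def ecr_image(account_id: str, region: str, repo: str, tag: str) -> str:
    """ECR image reference: <account>.dkr.ecr.<region>.amazonaws.com/<repo>:<tag>."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repo}:{tag}"


def helm_upgrade_cmd(release: str, chart: str, namespace: str, image: str) -> list[str]:
    """Helm step that rolls a new image into the release.

    Assumes the chart reads image.repository and image.tag from its values.
    """
    repository, tag = image.rsplit(":", 1)
    return [
        "helm", "upgrade", "--install", release, chart,
        "--namespace", namespace,
        "--set", f"image.repository={repository}",
        "--set", f"image.tag={tag}",
    ]


if __name__ == "__main__":
    img = ecr_image("123456789012", "us-east-1", "orders", "abc123")
    print(shlex.join(helm_upgrade_cmd("orders", "./charts/service", "prod", img)))
```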

Architecture Diagram — AWS EKS Migration & Microservices Modernization

Features & Capabilities

Unified Orchestration Platform

All workloads under single Amazon EKS cluster

Microservices Modernization

Monolithic/serverless functions converted to independent containerized services

Dynamic Auto-Scaling

KEDA for event-driven scaling (HTTP, RabbitMQ, SQS)
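For the SQS case, a KEDA ScaledObject might look like the sketch below, together with a rough estimate of the replica count it drives toward. The deployment name, queue URL, and the `keda-aws-auth` TriggerAuthentication (for example, one backed by IRSA) are hypothetical and the latter is not shown. The estimate ignores HPA tolerance, stabilization windows, and activation thresholds. RabbitMQ uses the `rabbitmq` trigger, and HTTP scaling goes through the separate KEDA HTTP add-on.

```python
import json
import math


def sqs_scaled_object(deployment: str, queue_url: str, region: str,
                      queue_length: int = 5, min_replicas: int = 0,
                      max_replicas: int = 20) -> dict:
    """KEDA ScaledObject that scales a Deployment on SQS backlog."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": f"{deployment}-sqs"},
        "spec": {
            "scaleTargetRef": {"name": deployment},
            "minReplicaCount": min_replicas,
            "maxReplicaCount": max_replicas,
            "triggers": [{
                "type": "aws-sqs-queue",
                # Hypothetical TriggerAuthentication name; its definition is not shown.
                "authenticationRef": {"name": "keda-aws-auth"},
                "metadata": {
                    "queueURL": queue_url,
                    "queueLength": str(queue_length),  # target messages per replica
                    "awsRegion": region,
                },
            }],
        },
    }


def approx_replicas(backlog: int, queue_length: int, min_replicas: int, max_replicas: int) -> int:
    """Rough target: ceil(backlog / queueLength), clamped to [min, max].

    An empty queue falls back to min_replicas (0 means scale to zero).
    """
    if backlog == 0:
        return min_replicas
    return max(min_replicas, min(max_replicas, math.ceil(backlog / queue_length)))


if __name__ == "__main__":
    url = "https://sqs.us-east-1.amazonaws.com/123456789012/orders"
    print(json.dumps(sqs_scaled_object("orders", url, "us-east-1"), indent=2))
```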

Cost-Optimized Compute

On-Demand (ARM/x86) + Spot; Karpenter dynamic provisioning

Zero-Downtime Deployments

Argo Rollouts supporting blue-green and canary releases with automated canary analysis
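A canary Rollout with an analysis gate could be sketched as follows. The replica count, step weights, pause durations, and the `success-rate` AnalysisTemplate are hypothetical. Without a traffic-routing integration, Argo Rollouts approximates each `setWeight` by scaling the canary ReplicaSet relative to the stable one. The blue-green variant would replace the `canary` block with `blueGreen` (using `activeService`/`previewService`).

```python
import json


def canary_rollout(name: str, image: str, analysis_template: str = "success-rate") -> dict:
    """Argo Rollouts Rollout using a canary strategy with an analysis gate."""
    labels = {"app": name}
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Rollout",
        "metadata": {"name": name},
        "spec": {
            "replicas": 4,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            "strategy": {
                "canary": {
                    "steps": [
                        {"setWeight": 20},
                        {"pause": {"duration": "5m"}},
                        # Runs the (hypothetical) AnalysisTemplate; a failed analysis
                        # aborts the rollout and returns traffic to the stable version.
                        {"analysis": {"templates": [{"templateName": analysis_template}]}},
                        {"setWeight": 50},
                        {"pause": {"duration": "5m"}},
                    ]
                }
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(canary_rollout("orders", "orders:abc123"), indent=2))
```

After the final step completes, the new version is promoted to full traffic.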

AI/ML-Ready Infrastructure

Foundation for model serving, training pipelines, and inference workloads

Security Hardening

GuardDuty for runtime threat detection; Pod Disruption Budgets for availability
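A Pod Disruption Budget protects availability during voluntary disruptions such as `kubectl drain` for node upgrades or Karpenter consolidation. Both go through the Eviction API, which refuses evictions that would drop the matching pods below `minAvailable`. A minimal sketch, assuming pods carry a hypothetical `app=<name>` label (`minAvailable` also accepts a percentage string, not modeled here):

```python
import json


def pod_disruption_budget(app: str, min_available: int = 1) -> dict:
    """PodDisruptionBudget keeping at least `min_available` pods labeled app=<app>."""
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": f"{app}-pdb"},
        "spec": {
            "minAvailable": min_available,
            "selector": {"matchLabels": {"app": app}},
        },
    }


def disruptions_allowed(healthy_pods: int, min_available: int) -> int:
    """Voluntary evictions the PDB currently permits (integer minAvailable)."""
    return max(0, healthy_pods - min_available)


if __name__ == "__main__":
    print(json.dumps(pod_disruption_budget("orders", min_available=2), indent=2))
```

With three healthy replicas and `minAvailable: 2`, a drain can evict one pod at a time and must wait for its replacement to become healthy before evicting the next.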

Standardized Service Templates

Shared Helm charts enforcing consistent deployment configuration across services

Technology Stack

Security & Compliance

IRSA (IAM Roles for Service Accounts, letting pods assume scoped IAM roles); least-privilege RBAC per service

GuardDuty Integration; Pod Security Standards

VPC Segmentation; Private Clusters

HIPAA, SOC 2, ISO 27001
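The IRSA and Pod Security Standards items above can be illustrated with three small manifests: a ServiceAccount annotated with an IAM role, that role's trust policy restricted to a single service account, and a namespace enforcing the `restricted` profile. The account ID, OIDC issuer ID, namespace, and names are hypothetical placeholders.

```python
import json


def irsa_service_account(name: str, namespace: str, role_arn: str) -> dict:
    """ServiceAccount whose pods receive temporary credentials for role_arn."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {"eks.amazonaws.com/role-arn": role_arn},
        },
    }


def irsa_trust_policy(account_id: str, oidc_issuer: str, namespace: str, sa: str) -> dict:
    """IAM trust policy letting exactly one service account assume the role.

    oidc_issuer is the cluster issuer without "https://",
    e.g. oidc.eks.<region>.amazonaws.com/id/<ID>.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{oidc_issuer}"},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {"StringEquals": {
                f"{oidc_issuer}:sub": f"system:serviceaccount:{namespace}:{sa}",
                f"{oidc_issuer}:aud": "sts.amazonaws.com",
            }},
        }],
    }


def restricted_namespace(name: str) -> dict:
    """Namespace enforcing (and warning on) the 'restricted' Pod Security Standard."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                "pod-security.kubernetes.io/enforce": "restricted",
                "pod-security.kubernetes.io/warn": "restricted",
            },
        },
    }


if __name__ == "__main__":
    issuer = "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE"
    print(json.dumps(irsa_trust_policy("123456789012", issuer, "orders", "orders-sa"), indent=2))
```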

Results & Impact

Workload Unification

All Lambda, EC2, and ECS workloads consolidated under EKS

Auto-Scaling

Independent scaling per microservice using KEDA

Resilience

Zero-downtime deployments with PDBs and graceful shutdowns
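On the application side, graceful shutdown means reacting to the SIGTERM that Kubernetes sends before killing a pod. A typical sequence is: report not-ready, wait briefly while routing updates propagate, stop accepting connections, and let in-flight requests finish within `terminationGracePeriodSeconds` (30s by default). A stdlib-only sketch; the `/readyz` path, port 8080, and the 5-second drain delay are hypothetical choices:

```python
import signal
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical drain delay; keep it well below terminationGracePeriodSeconds.
DRAIN_DELAY_S = 5.0

shutting_down = threading.Event()


class Handler(BaseHTTPRequestHandler):
    def do_GET(self):
        # /readyz reports 503 once shutdown has begun; other paths return 200.
        status = 503 if self.path == "/readyz" and shutting_down.is_set() else 200
        self.send_response(status)
        self.send_header("Content-Length", "0")
        self.end_headers()


def handle_sigterm(server: ThreadingHTTPServer, delay_s: float = DRAIN_DELAY_S) -> None:
    """Begin a graceful shutdown: go not-ready, wait, then stop the accept loop."""
    shutting_down.set()
    # server.shutdown() blocks until serve_forever() returns, so it must run on
    # another thread; a Timer thread also provides the drain delay.
    timer = threading.Timer(delay_s, server.shutdown)
    timer.daemon = True
    timer.start()


def main() -> None:
    server = ThreadingHTTPServer(("0.0.0.0", 8080), Handler)
    # Non-daemon request threads let server_close() wait for in-flight requests.
    server.daemon_threads = False
    signal.signal(signal.SIGTERM, lambda signum, frame: handle_sigterm(server))
    server.serve_forever()
    server.server_close()


if __name__ == "__main__":
    main()
```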

Cost Efficiency

Hybrid Spot + On-Demand + Graviton delivering significant savings

AI/ML Readiness

Foundation for model serving, training, and inference workloads
