AWS EKS Migration & Microservices Modernization with Cost-Optimized Auto-Scaling

Overview

This project focused on migrating a production-grade AWS workload running across Lambda, EC2, and ECS into a unified, scalable, and future-proof Kubernetes platform using Amazon EKS.

The client’s existing architecture relied on mixed compute services, which made scaling, cost optimization, and long-term maintainability challenging. To address this, we proposed and implemented Amazon EKS as the central orchestration platform, enabling a full transition to containerized microservices.

Client Profile

- Industry: Healthcare Technology / Pharmacy Benefit Administration

- Region: North America

- HQ: Midwest, USA (Ohio)

- Operations: Nationwide

- Company Size: Mid-Sized Enterprise (Est. 150–200 employees)

What They Do:

Core Business:

An independent provider of pharmacy data processing and administrative services. They build backend technology that allows Health Plans, Hospital Systems, and Hospice organizations to manage their own prescription drug programs.

Key Services:

- Claims Processing: Handling high-volume pharmacy transaction data.

- 340B Administration: Managing federal drug pricing compliance for hospitals and clinics.

- Data Transparency: Unlike traditional competitors, they utilize a “pass-through” model, granting their clients full ownership and 24/7 access to their own operational data.

Client Base:

They serve private-label Pharmacy Benefit Managers (PBMs), commercial health plans, and vertically integrated hospital systems.

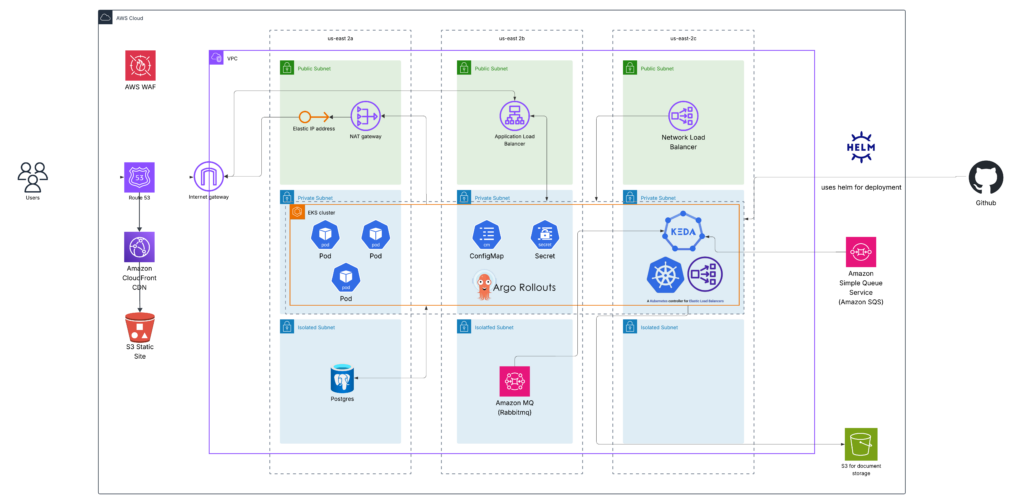

Infrastructure Overview

A production-ready, scalable infrastructure built around resilience, automation, and cost efficiency.

Cluster Architecture

- Managed EKS Control Plane (AWS-managed)

- Multi-AZ Node Groups:

  - Base Group (On-Demand): Critical system components (kube-proxy, CNI, CoreDNS).

  - Dynamic Group (Karpenter): Auto-provisions EC2 instances based on pod resource demands.

Instance Types:

- x86 (On-Demand) for stability.

- ARM (Graviton) for cost savings (AWS cites up to 40% better price-performance than comparable x86 instances).

- Spot instances for non-production environments (up to 90% off On-Demand pricing).
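To illustrate how the dynamic group can be limited to specific capacity types and CPU architectures, the sketch below builds a Karpenter NodePool manifest as a Python dict. The pool names, the `default` EC2NodeClass, and the `karpenter.sh/v1` API version are assumptions for illustration, not details taken from the project.

```python
def node_pool(name, capacity_types, architectures):
    """Build a Karpenter NodePool manifest (assumes the karpenter.sh/v1 API)."""
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    # Hypothetical EC2NodeClass holding AMI, subnet and
                    # security group settings.
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": "default",
                    },
                    "requirements": [
                        {
                            "key": "karpenter.sh/capacity-type",
                            "operator": "In",
                            "values": capacity_types,
                        },
                        {
                            "key": "kubernetes.io/arch",
                            "operator": "In",
                            "values": architectures,
                        },
                    ],
                }
            }
        },
    }


# On-Demand for production, Spot for non-production (pool names are hypothetical).
production_pool = node_pool("production", ["on-demand"], ["arm64", "amd64"])
non_production_pool = node_pool("non-production", ["spot"], ["arm64", "amd64"])
```

Serializing either dict to YAML or JSON gives a manifest that can be reviewed and applied like any other cluster resource.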

Networking Design

- VPC with Public/Private Subnets

- ALB/NLB for public ingress

- Ingress Controllers for routing rules (e.g., /api/v1 → service-a)

- PrivateLink (if applicable) for internal service communication
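The routing rule mentioned above (/api/v1 → service-a) could be expressed as an Ingress handled by the AWS Load Balancer Controller. The following is a minimal sketch; the Ingress name `public-api` and port 80 are assumptions.

```python
def alb_ingress(name, routes):
    """Build an Ingress for the AWS Load Balancer Controller.

    routes maps a path prefix to a (service name, port) pair.
    """
    paths = [
        {
            "path": prefix,
            "pathType": "Prefix",
            "backend": {"service": {"name": svc, "port": {"number": port}}},
        }
        for prefix, (svc, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {
            "name": name,
            "annotations": {
                # Public ALB that targets pod IPs directly.
                "alb.ingress.kubernetes.io/scheme": "internet-facing",
                "alb.ingress.kubernetes.io/target-type": "ip",
            },
        },
        "spec": {
            "ingressClassName": "alb",
            "rules": [{"http": {"paths": paths}}],
        },
    }


# "public-api" and port 80 are hypothetical values.
ingress = alb_ingress("public-api", {"/api/v1": ("service-a", 80)})
```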

Storage & Statelessness

- Stateless Pods: All application logic runs in containers.

- External Persistent Storage: PVCs backed by EBS volumes (gp3) or EFS for shared data.

- No local disk usage for stateful apps; persistent data is decoupled from compute.
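As a sketch of the EBS-backed option, the snippet below defines a gp3 StorageClass for the EBS CSI driver and a claim against it. The class name, claim name, and 20Gi size are assumptions.

```python
def gp3_storage_class(name="gp3"):
    """StorageClass provisioned by the EBS CSI driver using gp3 volumes."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "ebs.csi.aws.com",
        "parameters": {"type": "gp3"},
        # Delay volume creation until a pod is scheduled, so the volume
        # is created in the same Availability Zone as the pod.
        "volumeBindingMode": "WaitForFirstConsumer",
    }


def volume_claim(name, size, storage_class="gp3"):
    """PersistentVolumeClaim for a single-node read/write EBS volume."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


storage_class = gp3_storage_class()
claim = volume_claim("reports-data", "20Gi")  # hypothetical name and size
```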

CI/CD Pipeline

- GitHub Actions triggered on code push

- CodeBuild builds Docker images and pushes to ECR

- Helm Upgrade deploys to EKS via Argo Rollouts

- Canary Analysis: Metrics (latency, error rate) monitored during rollout
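The canary analysis step boils down to comparing the new version's metrics against a baseline and deciding whether to continue. The plain-Python sketch below shows that decision logic only; the thresholds and metric names are hypothetical, and in a real setup the check would normally live in an Argo Rollouts analysis rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class CanaryMetrics:
    p95_latency_ms: float
    error_rate: float  # fraction of failed requests, 0.0 to 1.0


def canary_verdict(canary, baseline, max_latency_ratio=1.2, max_error_rate=0.01):
    """Return "promote" if the canary stays within both limits, else "rollback".

    The default limits (20% latency regression, 1% errors) are illustrative.
    """
    latency_ok = canary.p95_latency_ms <= baseline.p95_latency_ms * max_latency_ratio
    errors_ok = canary.error_rate <= max_error_rate
    return "promote" if latency_ok and errors_ok else "rollback"
```

For example, a canary with 110 ms p95 latency against a 100 ms baseline and a 0.2% error rate would be promoted under these defaults, while one at 150 ms would be rolled back.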

Observability Stack

- CloudWatch Container Insights: Real-time metrics (CPU, memory, network)

- Custom Dashboards: For pod health, scaling events, and deployment success

- Alerts: Set up via CloudWatch Alarms for high CPU, failed pods, etc.
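A high-CPU alert of the kind listed above can be defined on a Container Insights metric. The sketch below only builds the keyword arguments for boto3's CloudWatch `put_metric_alarm` call; the 80% threshold, the five one-minute evaluation periods, and the alarm naming scheme are assumptions.

```python
def pod_cpu_alarm(cluster, namespace, pod_name, threshold_percent=80.0):
    """Keyword arguments for CloudWatch put_metric_alarm on pod CPU usage."""
    return {
        "AlarmName": f"{cluster}-{namespace}-{pod_name}-high-cpu",
        "Namespace": "ContainerInsights",
        "MetricName": "pod_cpu_utilization",
        "Dimensions": [
            {"Name": "ClusterName", "Value": cluster},
            {"Name": "Namespace", "Value": namespace},
            {"Name": "PodName", "Value": pod_name},
        ],
        "Statistic": "Average",
        "Period": 60,            # seconds per data point
        "EvaluationPeriods": 5,  # alarm after 5 consecutive breaches
        "Threshold": threshold_percent,
        "ComparisonOperator": "GreaterThanThreshold",
    }


# Cluster, namespace and workload names are hypothetical.
alarm = pod_cpu_alarm("prod-eks", "claims", "claims-api")
# With AWS credentials configured, the alarm could be created with:
#   boto3.client("cloudwatch").put_metric_alarm(**alarm)
```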

Key Features

This project transformed a fragmented, legacy AWS architecture into a modern, resilient, and cost-efficient Kubernetes-powered platform.

| Feature | Description |
| --- | --- |
| Unified Orchestration Platform | Migrated Lambda, EC2, and ECS workloads into a single Amazon EKS cluster for centralized management. |
| Microservices Modernization | Converted monolithic and serverless functions into independent, containerized microservices with clear boundaries. |
| Dynamic Auto-Scaling | Implemented KEDA for event-driven scaling (HTTP, RabbitMQ, SQS), enabling real-time responsiveness to business demand. |
| Cost-Optimized Compute | Used On-Demand (ARM/x86) + Spot instances; Karpenter dynamically provisions nodes based on actual pod requirements. |
| Zero-Downtime Deployments | Argo Rollouts enabled Blue-Green deployments with automated canary analysis and rollback. |
| Standardized Service Templates | Helm charts enforced consistency across all microservices (security, monitoring, scaling). |
| Enhanced Observability | CloudWatch Container Insights provided deep visibility into pods, nodes, and workloads. |
| Security Hardening | Integrated GuardDuty for runtime threat detection; Pod Disruption Budgets ensured availability during maintenance. |

Technology Stack

A full-stack Kubernetes ecosystem built on AWS-native services:

| Category | Services & Tools |
| --- | --- |
| Orchestration | Amazon EKS (managed Kubernetes), Karpenter (auto-provisioning), Helm (package manager) |
| Compute & Scaling | EC2 (On-Demand, Spot), ARM/x86 instance types, KEDA (event-driven autoscaling) |
| Networking | AWS Load Balancer Controller, Application Load Balancer (ALB), Network Load Balancer (NLB), Ingress resources |
| CI/CD & Deployment | GitHub Actions, AWS CodeBuild, Argo Rollouts (Blue-Green, Canary) |
| Containerization | Docker, Container Images (private ECR registry) |
| Monitoring & Logging | Amazon CloudWatch Container Insights, Fluent Bit (log collector), Prometheus/Grafana (optional in-scope) |
| Security | Amazon GuardDuty (EKS integration), IAM Roles for Service Accounts (IRSA), Pod Security Standards / OPA Gatekeeper (if applied) |
| Infrastructure as Code (IaC) | Terraform, CloudFormation (for EKS cluster and VPC setup) |
| Secrets Management | AWS Secrets Manager or SSM Parameter Store (integrated via K8s secrets) |

Note: IRSA lets pods call AWS APIs without storing static credentials.

Security Model

Security was embedded at every layer — from deployment to runtime.

Identity & Access Control:

- IAM Roles for Service Accounts (IRSA): Pods assume IAM roles instead of carrying long-lived credentials.

- Least-Privilege RBAC: Kubernetes Role-Based Access Control defined per service.
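In practice, the two controls above come down to a ServiceAccount annotated with an IAM role and a narrowly scoped Kubernetes Role. The sketch below shows both; the account ID, role name, namespace, and the choice of read-only ConfigMap access are placeholders for illustration.

```python
def irsa_service_account(name, namespace, role_arn):
    """ServiceAccount whose pods assume the given IAM role through IRSA."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {"eks.amazonaws.com/role-arn": role_arn},
        },
    }


def read_only_config_role(name, namespace):
    """Namespaced Role that can only read ConfigMaps (example of least privilege)."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [
            {"apiGroups": [""], "resources": ["configmaps"], "verbs": ["get", "list"]}
        ],
    }


# Placeholder account ID and role name.
service_account = irsa_service_account(
    "claims-api", "claims", "arn:aws:iam::111122223333:role/claims-api-role"
)
role = read_only_config_role("claims-api-config-reader", "claims")
```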

Runtime Protection:

- Amazon GuardDuty Integration: Monitored for suspicious activity (e.g., unauthorized API calls, malware).

- Pod Security Standards: Enforced via policies (e.g., no privileged containers, restricted capabilities).
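One common way to enforce Pod Security Standards is through namespace labels read by the built-in Pod Security Admission controller. The sketch below assumes that mechanism and the `restricted` profile; the source does not say which enforcement tool was used.

```python
def restricted_namespace(name):
    """Namespace that rejects pods violating the "restricted" profile."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                # Reject non-compliant pods at admission time.
                "pod-security.kubernetes.io/enforce": "restricted",
                "pod-security.kubernetes.io/enforce-version": "latest",
                # Also surface warnings to whoever applies the manifest.
                "pod-security.kubernetes.io/warn": "restricted",
            },
        },
    }


namespace = restricted_namespace("claims")  # hypothetical namespace name
```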

Deployment Safety:

- Pod Disruption Budgets (PDBs): Prevented over-aggressive node scaling from disrupting critical services.

- Graceful Shutdown: Configured pre-stop hooks to drain active requests before pod termination.
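The two safeguards above can be sketched as a PodDisruptionBudget plus a pre-stop delay on the container. The label key `app`, the sleep-based hook, and the timings are assumptions; the actual hooks used in the project are not described.

```python
def pod_disruption_budget(app, min_available):
    """Keep at least min_available pods of the app running during voluntary disruptions."""
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": f"{app}-pdb"},
        "spec": {
            "minAvailable": min_available,
            "selector": {"matchLabels": {"app": app}},
        },
    }


def graceful_shutdown(drain_seconds, grace_seconds):
    """Return pod-level and container-level settings for draining requests.

    The grace period must be longer than the pre-stop delay, otherwise the
    container is killed before the drain window ends.
    """
    if grace_seconds <= drain_seconds:
        raise ValueError("terminationGracePeriodSeconds must exceed the preStop delay")
    return {
        # Merge into the pod spec.
        "podSpec": {"terminationGracePeriodSeconds": grace_seconds},
        # Merge into the container definition.
        "container": {
            "lifecycle": {
                "preStop": {"exec": {"command": ["sh", "-c", f"sleep {drain_seconds}"]}}
            }
        },
    }


pdb = pod_disruption_budget("claims-api", 2)  # hypothetical app name
shutdown = graceful_shutdown(drain_seconds=15, grace_seconds=45)
```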

Network Isolation:

- VPC Segmentation: EKS cluster deployed in dedicated subnets with security groups.

- Private Clusters: Public access disabled; only ALBs/NLBs exposed externally.

Audit & Compliance:

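- CloudTrail captured AWS API activity, including calls to the EKS API; Kubernetes API server actions are recorded in the EKS control plane audit logs, which are delivered to CloudWatch Logs when enabled.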

- Audit trails available via CloudWatch and GuardDuty findings.

Data Types & Standards

Designed for enterprise-grade data handling with compliance awareness.

| Data Type | Handling Approach | Compliance Alignment |
| --- | --- | --- |
| Application Data | Stored in external managed databases (RDS, DynamoDB); EKS pods are stateless | HIPAA, SOC2 (via encryption & audit trails) |
| Logs & Metrics | Collected via Fluent Bit → CloudWatch Container Insights | Audit-ready, searchable, retained per policy |
| Secrets & Credentials | Managed via AWS Secrets Manager / SSM Parameter Store; injected as Kubernetes secrets | No plaintext storage |
| Workload Configuration | Environment-specific values via Helm charts and Kustomize | Version-controlled, secure, auditable |
| Message Queues (RabbitMQ/SQS) | Used as scaling triggers; messages processed securely | Retention policies applied |
| User Traffic (HTTP) | Handled by ALB/NLB with WAF integration (implied) | DDoS protection, input validation |

Relevant compliance standards include HIPAA (healthcare data), SOC 2 (data integrity), and ISO 27001 (security controls). They are not stated explicitly in the project requirements; alignment is assumed based on modern cloud practices.

Migration & Containerization

- Converted existing AWS Lambda functions into RESTful API-based microservices

- Refactored applications running on EC2 and ECS into independent microservices

- Created standardized Dockerfiles and containerized all services

- Designed environment-specific configurations (dev, staging, production)
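One low-risk way to move a Lambda function into a container is to keep the handler unchanged and put a thin HTTP adapter in front of it. The sketch below uses only the Python standard library; the handler body, the event shape, and port 8080 are hypothetical, and a production service would more likely use a web framework.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def lambda_handler(event, context):
    """Stand-in for an existing Lambda function (hypothetical business logic)."""
    payload = json.loads(event.get("body") or "{}")
    return {"statusCode": 200, "body": json.dumps({"echo": payload})}


def invoke(method, path, body):
    """Translate an HTTP request into a Lambda-style event and call the handler."""
    event = {"httpMethod": method, "path": path, "body": body}
    return lambda_handler(event, None)


class Handler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = self.rfile.read(length).decode("utf-8")
        result = invoke("POST", self.path, body)
        data = result["body"].encode("utf-8")
        self.send_response(result["statusCode"])
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port=8080):
    """Container entry point: run the adapter until the process is stopped."""
    HTTPServer(("0.0.0.0", port), Handler).serve_forever()
```

Calling `serve()` as the container's entry point exposes the original handler over HTTP, so it can sit behind a Kubernetes Service and Ingress like any other microservice.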

EKS Cluster & Compute Strategy

- Built a production-grade EKS cluster

- Implemented multiple node groups for reliability and cost efficiency:

  - Base node group (On-Demand) hosting the critical core components and system workloads of Kubernetes

  - Dynamic node group managed by Karpenter, automatically provisioning EC2 instances based on pod CPU and memory requirements

- Used a mix of On-Demand instances (ARM and x86), plus Spot instances in non-production environments, to reduce infrastructure cost

Networking & Traffic Exposure

- Exposed services using AWS Load Balancer Controller

- Configured Application Load Balancers (ALBs) and Network Load Balancers (NLBs) for secure, scalable public access

- Defined Kubernetes Ingress rules for controlled routing

Auto-Scaling Strategy

Implemented KEDA (Kubernetes Event-Driven Autoscaling) to support multiple scaling triggers:

- HTTP request-rate-based scaling

- RabbitMQ queue-depth-based scaling

- SQS queue-depth-based scaling, including in-flight messages

This allowed each microservice to scale independently based on real business loads.
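The queue-based triggers can be sketched as a KEDA ScaledObject that targets an Argo Rollout. The queue names, queue URL, region, environment variable, and target values below are placeholders. The HTTP trigger is left out because it is usually handled by a separate add-on or a metrics-based trigger, and the source does not say which was used. The `desired_replicas` helper approximates how a per-replica target turns queue depth into a replica count.

```python
import math


def scaled_object(name, target_rollout, triggers, min_replicas=1, max_replicas=20):
    """Build a KEDA ScaledObject that scales an Argo Rollout."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": name},
        "spec": {
            "scaleTargetRef": {
                "apiVersion": "argoproj.io/v1alpha1",
                "kind": "Rollout",
                "name": target_rollout,
            },
            "minReplicaCount": min_replicas,
            "maxReplicaCount": max_replicas,
            "triggers": triggers,
        },
    }


# Placeholder queue names, URL, region and targets.
rabbitmq_trigger = {
    "type": "rabbitmq",
    "metadata": {
        "queueName": "claims",
        "mode": "QueueLength",
        "value": "50",                 # target messages per replica
        "hostFromEnv": "RABBITMQ_URL",  # connection string read from the pod env
    },
}
sqs_trigger = {
    "type": "aws-sqs-queue",
    "metadata": {
        "queueURL": "https://sqs.us-east-2.amazonaws.com/111122223333/claims-queue",
        "queueLength": "10",
        "awsRegion": "us-east-2",
        "scaleOnInFlight": "true",  # count in-flight messages as well
    },
}

claims_worker = scaled_object("claims-worker", "claims-worker", [rabbitmq_trigger, sqs_trigger])


def desired_replicas(queue_depth, target_per_replica, min_replicas, max_replicas):
    """Approximate replica count for a queue-depth target, clamped to the bounds."""
    wanted = math.ceil(queue_depth / target_per_replica)
    return max(min_replicas, min(max_replicas, wanted))
```

With a target of 50 messages per replica, a backlog of 120 messages asks for 3 replicas, while an empty queue falls back to the configured minimum.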

Deployment & Reliability

- Used GitHub Actions workflows together with AWS CodeBuild to run deployments

- Implemented Argo Rollouts for Blue-Green deployments, ensuring zero-downtime releases

- Added Pod Disruption Budgets (PDBs) to prevent service disruption during node scaling or replacement

- Configured graceful shutdown and termination handling to ensure no active requests were dropped during deployments or node drains
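A Blue-Green release with Argo Rollouts is configured through the Rollout's strategy block, which points at an active and a preview Service. The sketch below shows the general shape; the service naming scheme, image, replica count, manual promotion, and 30-second scale-down delay are assumptions.

```python
def blue_green_rollout(name, image, replicas=3):
    """Build an Argo Rollouts manifest that uses the blueGreen strategy."""
    labels = {"app": name}
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Rollout",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            "strategy": {
                "blueGreen": {
                    # Service receiving live traffic.
                    "activeService": f"{name}-active",
                    # Service exposing the new version before promotion.
                    "previewService": f"{name}-preview",
                    # Require an explicit promotion step.
                    "autoPromotionEnabled": False,
                    # Keep the old version briefly so in-flight requests finish.
                    "scaleDownDelaySeconds": 30,
                }
            },
        },
    }


# Hypothetical service name and image.
rollout = blue_green_rollout("claims-api", "111122223333.dkr.ecr.us-east-2.amazonaws.com/claims-api:1.0.0")
```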

Standardization & Templates

Created Helm-based standardized templates for all microservices, including:

- Argo Rollouts

- Services & Ingress

- KEDA ScaledObjects

- Service Accounts & Secrets

- Pod Disruption Budgets

- Monitoring annotations

This significantly improved deployment consistency and onboarding speed for new services.
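The consistency benefit comes from every service sharing one set of defaults and overriding only what differs. The sketch below mimics how Helm layers values files (nested maps are merged, scalars and lists are replaced); the keys and numbers shown are hypothetical.

```python
import copy


def merge_values(base, override):
    """Deep-merge override into base without mutating either input."""
    result = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge_values(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


# Hypothetical shared defaults for every microservice.
defaults = {
    "replicas": 2,
    "pdb": {"enabled": True, "minAvailable": 1},
    "keda": {"enabled": False, "maxReplicas": 10},
}
# Hypothetical production overrides for one service.
production = {"replicas": 4, "keda": {"enabled": True}}

values = merge_values(defaults, production)
```

Here `values` keeps the default PDB settings and `maxReplicas`, while `replicas` and `keda.enabled` take the production overrides.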

Monitoring & Security

- Enabled Amazon CloudWatch Container Insights for:

  - Node-level metrics

  - Pod and workload monitoring

- Integrated Amazon GuardDuty for EKS to detect:

  - Unauthorized access

  - Malware activity

  - Runtime security threats

Outcome

- Successfully unified all workloads under EKS

- Improved scalability, resilience, and deployment safety

- Reduced operational cost through intelligent autoscaling and Spot usage

- Delivered a secure, observable, and future-ready microservices platform

Summary: Why Our Solution Stands Out

| Aspect | Achievement |
| --- | --- |
| Scalability | Independent scaling per microservice using KEDA |
| Resilience | Zero-downtime deployments, PDBs, graceful shutdowns |
| Cost Efficiency | Hybrid use of Spot + On-Demand + Graviton = significant savings |
| Maintainability | Helm templates + IaC = repeatable, consistent deployments |
| Future-Readiness | Foundation for AI/ML, serverless, and edge computing |

Skills

- Amazon EKS

- Kubernetes

- AWS Cloud Architecture

- Microservices Architecture

- Docker

- Karpenter

- KEDA Autoscaling

- Argo Rollouts (Blue-Green Deployments)

- AWS Load Balancer Controller

- Helm

- CloudWatch Container Insights

- AWS GuardDuty

- Cost Optimization

- Infrastructure Scalability & Reliability